Async HQbird replication

How to set up Firebird asynchronous replication with HQbird Enterprise in a distributed environment: network share or FTP/SSH

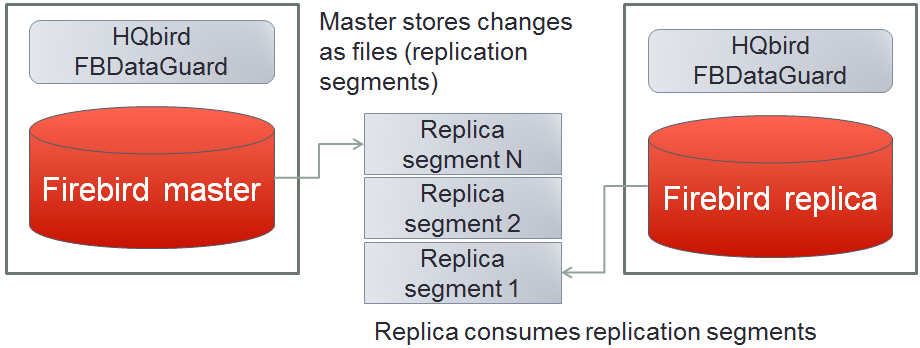

Asynchronous replication in HQbird is the easiest way to create a mirror (also called a warm-standby) copy of a production Firebird database. Firebird on the master server stores all changes in files called replication segments, and Firebird on the replica server consumes these segments, i.e., it applies the changes to the replica database.

If the master and replica Firebird instances are on different servers, the replication segments must be transferred from the master to the replica. There are 2 main ways to do it: network share or FTP/SSH.

How to transfer HQbird replication segments between master and replica via embedded HQbird FTP

"Transfer Replication Segments" in HQbird

In a distributed environment, when the network connection between the master and replica servers is unstable or has high latency, or when the servers are in different geographical regions, the best way to transfer replication segments is through FTP or SSH.

HQbird can transfer replication segment files from the master server to a remote server: it has an embedded FTP client and server, so the transfer can be organized without additional tools.

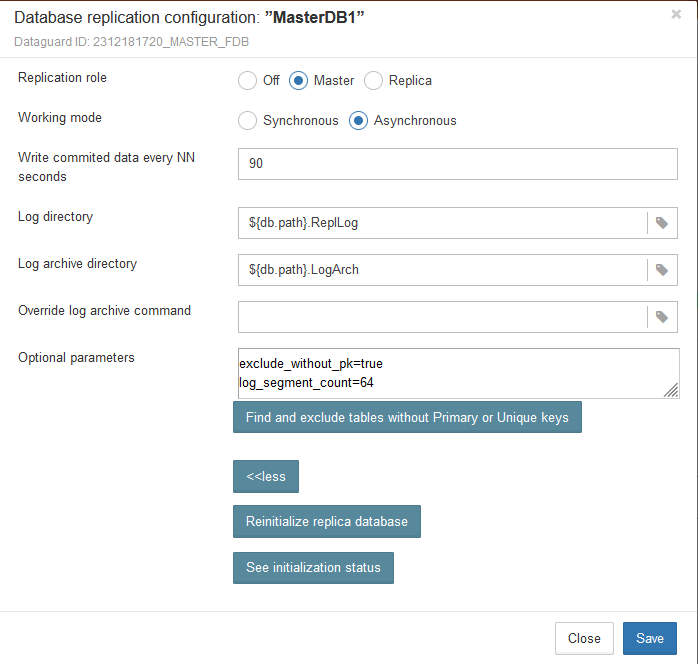

By default, when you enable replication as Master -> Asynchronous, HQbird configures it to save replication logs in 2 folders in the same folder where the database resides. HQbird creates these folders automatically when you click Save.

These folders are referenced with the macro ${db.path}, which specifies the path to the database.

Click Save to create the configuration for the master replication. To actually start replication, restart the FirebirdHQbird... service.

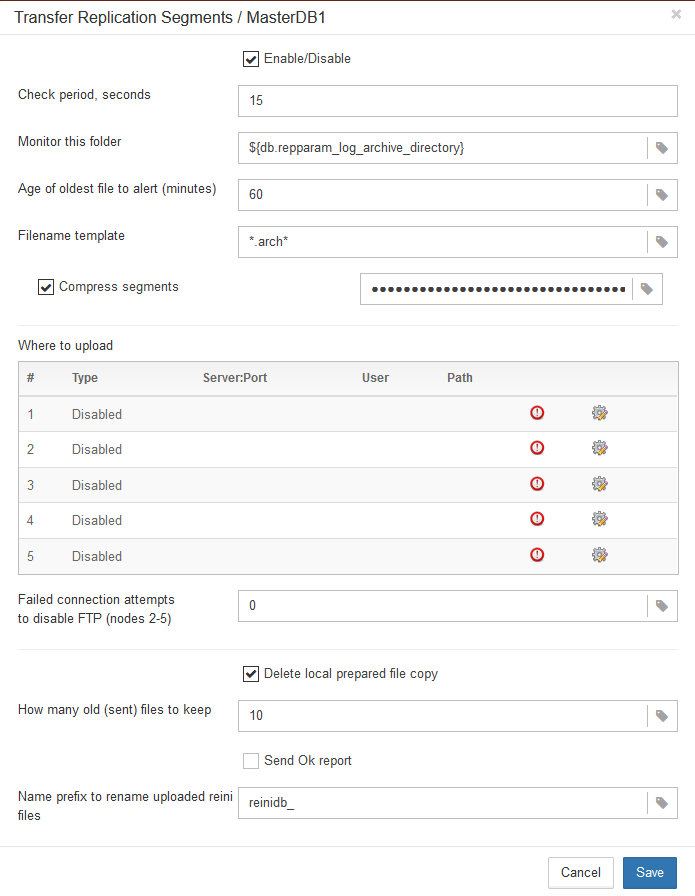

After that, we can configure the Transfer Replication Segments job to monitor the folder for new replication segments and upload them to the remote FTP server (see the screenshot below).

Parameters:

- «Check period, seconds» - how often to check for new segments

- «Monitor this folder» - the folder that the job checks for segments. As you can see, it is referenced through the macro ${db.repparam_log_archive_directory}, which automatically fills this field with the path to the LogArch folder.

- «Filename template» - the job looks for files whose names match the mask given in this field.

- "Age of oldest file to alert (minutes)" - if HQbird sees a segment older than the specified interval (60 minutes by default), it starts to warn that the transfer is delayed, too slow, or has other difficulties.

- Compress segments - by default, the Transfer Replication Segments job compresses and encrypts replication segments before they are sent. HQbird creates a compressed and encrypted copy of each replication segment and uploads it to the specified target server.

- Failed connection attempts to disable FTP - how many times HQbird will try to send segments before it stops the transfer attempts. The default is 0, which means never stop.

- How many old (sent) files to keep - by default, HQbird keeps the 10 most recently sent files and rotates out older ones.

- Send Ok report - send an email to the address specified in Alerts every time a replication segment is uploaded. By default, it is off.

- Name prefix to rename uploaded reini files - a service field used by the automatic reinitialization process. Do not change it.
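
To make the «Monitor this folder», «Filename template» and "Age of oldest file to alert" parameters more concrete, here is a minimal Python sketch of the same idea: list the files in a folder that match a mask and flag those older than a threshold. It is only an illustration and not how HQbird implements the job; the function names and the `*arch*` mask used in the usage note are assumptions made for the example.

```python
import fnmatch
import os
import time


def find_pending_segments(folder, mask):
    """Return (path, age_in_minutes) for files in `folder` matching `mask`, oldest first."""
    now = time.time()
    pending = []
    for entry in os.scandir(folder):
        if entry.is_file() and fnmatch.fnmatch(entry.name, mask):
            age_minutes = (now - entry.stat().st_mtime) / 60
            pending.append((entry.path, age_minutes))
    # Oldest segments first: these are the ones that may indicate a stalled transfer.
    pending.sort(key=lambda item: item[1], reverse=True)
    return pending


def delayed_segments(pending, max_age_minutes=60):
    """Return paths of segments older than the alert threshold (60 minutes, as in the text)."""
    return [path for path, age in pending if age > max_age_minutes]
```

For example, `delayed_segments(find_pending_segments(folder, "*arch*"))` returns the segments in `folder` that have been waiting for more than an hour, which is a quick way to check by hand whether the transfer is keeping up.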

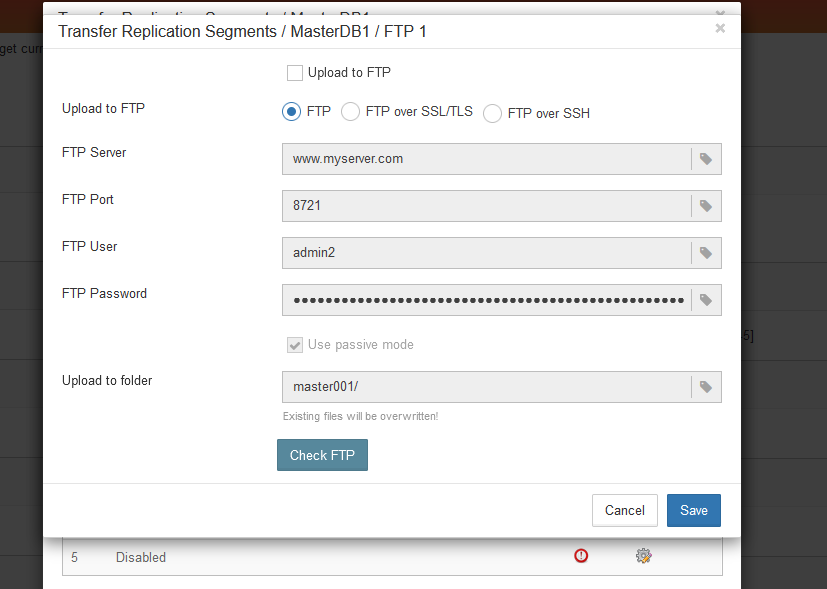

There are several types of target servers: FTP, FTP over SSL/TLS, FTP over SSH. When you select the necessary type, the dialog shows the mandatory fields to be completed. The embedded FTP server is a standard one.

Up to 5 destination FTP servers can be configured.

Note: the FTP server is embedded in HQbird; it only needs to be enabled on the replica side.
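
Before enabling the transfer job, it can be useful to confirm that the master can actually log in to the FTP server on the replica. The sketch below uses Python's standard `ftplib` for that; the host, port and credentials are placeholders you would replace with the values from your own setup, and `can_reach_ftp` is a name invented for this example. It covers plain FTP and explicit FTP over TLS only; FTP over SSH (SFTP) is a different protocol and is not handled by `ftplib`.

```python
import ftplib


def can_reach_ftp(host, port, user, password, use_tls=False, timeout=10):
    """Return True if we can connect, log in and query the working directory."""
    ftp = ftplib.FTP_TLS(timeout=timeout) if use_tls else ftplib.FTP(timeout=timeout)
    try:
        ftp.connect(host, port)
        ftp.login(user, password)
        if use_tls:
            ftp.prot_p()  # also protect the data channel
        ftp.pwd()  # a harmless command that proves the session works
        ftp.quit()
        return True
    except ftplib.all_errors:
        ftp.close()
        return False
```

A `False` result from the master usually points to a firewall, a wrong port, or wrong credentials rather than to a replication problem.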

File Receiver

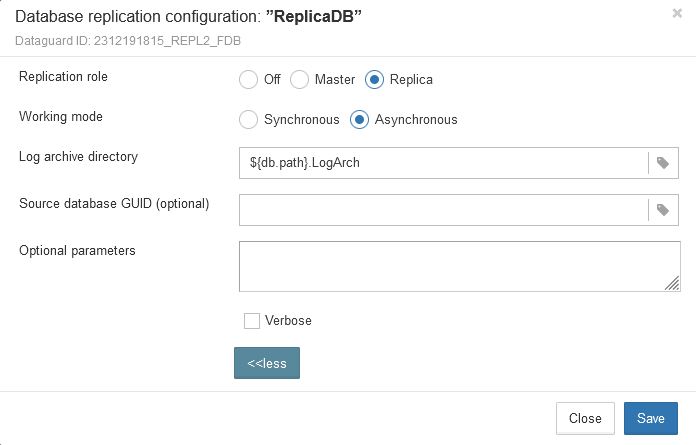

To receive the transferred files and to decompress and decrypt them into regular replication segments, another instance of HQbird should be installed on the replica server. Let's consider how to configure it.

First, configure the replica database. For this, open the replication settings dialog in the header of the database and choose Replica -> Asynchronous.

Other parameters in this dialog will be pre-filled automatically.

Click Save in this dialog. Remember that to actually apply the changes, you need to restart Firebird (or reconnect all users at once, which is essentially the same).

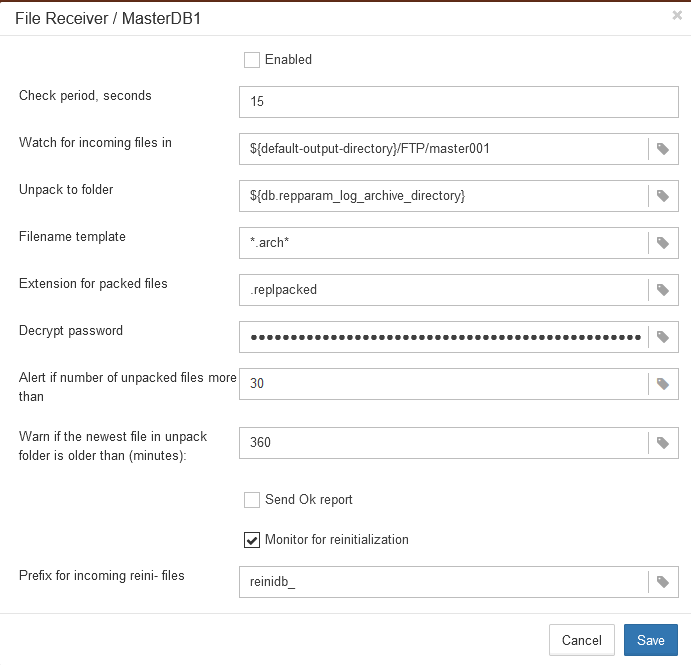

Then, open the File Receiver widget on the replica.

File Receiver checks files in the folder specified in «Monitor directory», with an interval equal to «Check periods, minutes». It checks only files with the specified mask (*arch* by default) and the specified extension (.replpacked by default). When it encounters such files, it decompresses and decrypts them with the password specified in «Decrypt password» and copies them to the folder specified in «Unpack to directory».

There are the following additional parameters:

- Remove packed files after unpacking - On by default. It means that FBDataGuard deletes received compressed files after successful unpacking.

- Send Ok report - Off by default. If it is On, FBDataGuard sends an email about each successfully unpacked segment.

- Perform fresh unpack - disabled by default. File Receiver remembers the number of the last replication segment it unpacked. If you need to start unpacking from scratch, from segment 1 (for example, after re-initialization of replication), enable this parameter. Please note that it is automatically disabled again once the counter has been reset.
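
If received files do not seem to be picked up, it helps to check which files in the monitored folder actually match the mask and extension. The following Python sketch does only that selection step. It assumes that the mask and the extension are simply joined into one pattern (`*arch*` + `.replpacked`), which is an assumption made for this example and not a documented detail; decompression and decryption are left out because the packed format is not described here.

```python
import fnmatch
import os


def select_packed_files(monitor_dir, mask="*arch*", extension=".replpacked"):
    """Return the sorted names of files in `monitor_dir` that match mask + extension."""
    pattern = mask + extension  # assumed combination, e.g. "*arch*.replpacked"
    names = [
        name
        for name in os.listdir(monitor_dir)
        if os.path.isfile(os.path.join(monitor_dir, name))
        and fnmatch.fnmatch(name, pattern)
    ]
    return sorted(names)
```

An empty result for the folder given in «Monitor directory» suggests that the files are arriving in a different folder or under different names than the receiver expects.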

How to transfer HQbird replication segments between master and replica via network share

If your Firebird master and replica run on servers in a fast local network (1 Gbit recommended), the best way to transfer replication segments is probably a network share. Let's consider how to set it up properly.

First, you need to decide where to set up the network share that stores the asynchronous replication segments: it could be 1) on the master, 2) on the replica, or 3) at some third location.

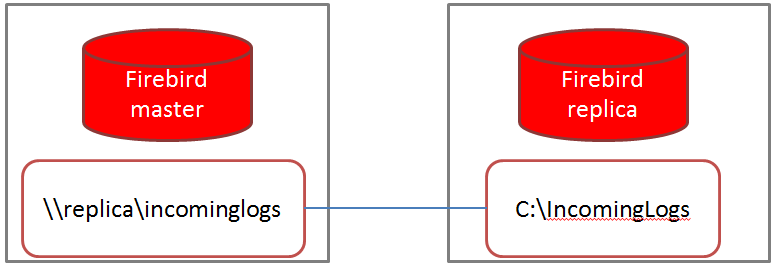

Logs stored on the replica

The replication mechanism on the master writes asynchronous segments to the specified location. If that location becomes unavailable, replication stops, and then operations on the master stop too.

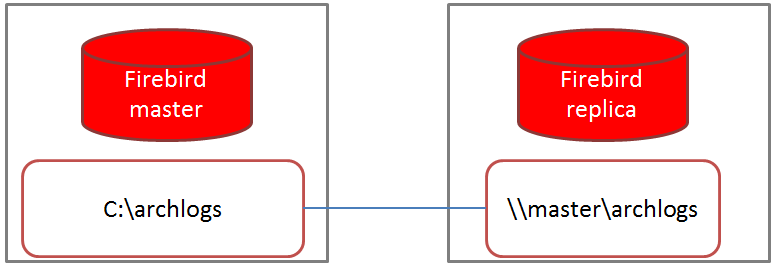

Logs stored on the master server

A better configuration is to create the network share on the master and mount it on the replica server. In this case, the replica reads replication segments from the network share, and if the share becomes unavailable, the replica server stops updating the database but remains available for read-only requests. When the network share becomes available again, the replica resumes importing the replication segments.

Logs stored in the third location

The third option, when the network share is created on a third server or network storage, is a combination of the 1st and 2nd options.

Conclusion

The obvious option is to store replication segments on the master in a local folder and share them with the replica through a network share mounted on the replica.

Options that store segments on the replica server (or in another location) are less convenient because the master server will depend on the availability of the network share.

User rights for Firebird to access network shares

Second, you need to make sure that Firebird has sufficient rights to access the network share where the logs are located. Normally the Firebird service runs as Local System on Windows and as the firebird user on Linux.

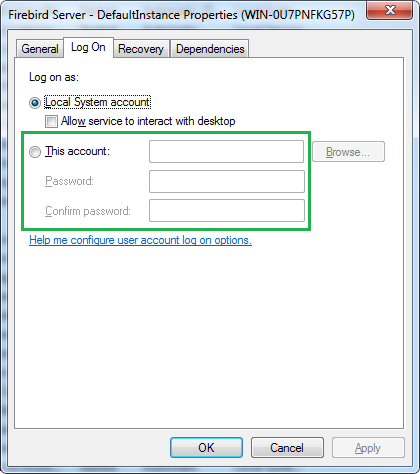

On Windows, the easiest way is to specify another account with sufficient rights to access network shares. Something like a domain admin could be a good choice. Use the Services applet (run services.msc), open the properties of the FirebirdHQbird... service, and assign the new user on the Log On tab. Restart the Firebird service for these changes to take effect.

If the master and replica servers are in different Windows domains, the setup will require non-trivial tuning of security settings for the network share. In that case we simply recommend using the FTP approach.

On Linux the situation is a bit more difficult. Even if the master creates replication segment files as the firebird user and the replica server also runs as firebird, the numeric user IDs (UIDs) of both firebird users should be identical to allow Firebird to access replication segments on the mounted network share. If you don't know how to set up mounting properly, choose FTP instead.
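
One way to compare the firebird accounts on the two Linux servers is to print their numeric IDs on each machine and check that they match. The sketch below uses Python's standard `pwd` module (Unix only); `account_ids` is a helper name made up for this example, and the default user name `firebird` is taken from the text above.

```python
import pwd


def account_ids(username="firebird"):
    """Return (uid, gid) of a local account; raises KeyError if the account does not exist."""
    entry = pwd.getpwnam(username)
    return entry.pw_uid, entry.pw_gid
```

Run `print(account_ids())` on both the master and the replica. If the two pairs differ, files written by one server may not be accessible to Firebird on the other, even though the user name is the same.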

So, choose the option you prefer from the ones above (logs stored on the replica, on the master, or at a third location), and set it up accordingly.

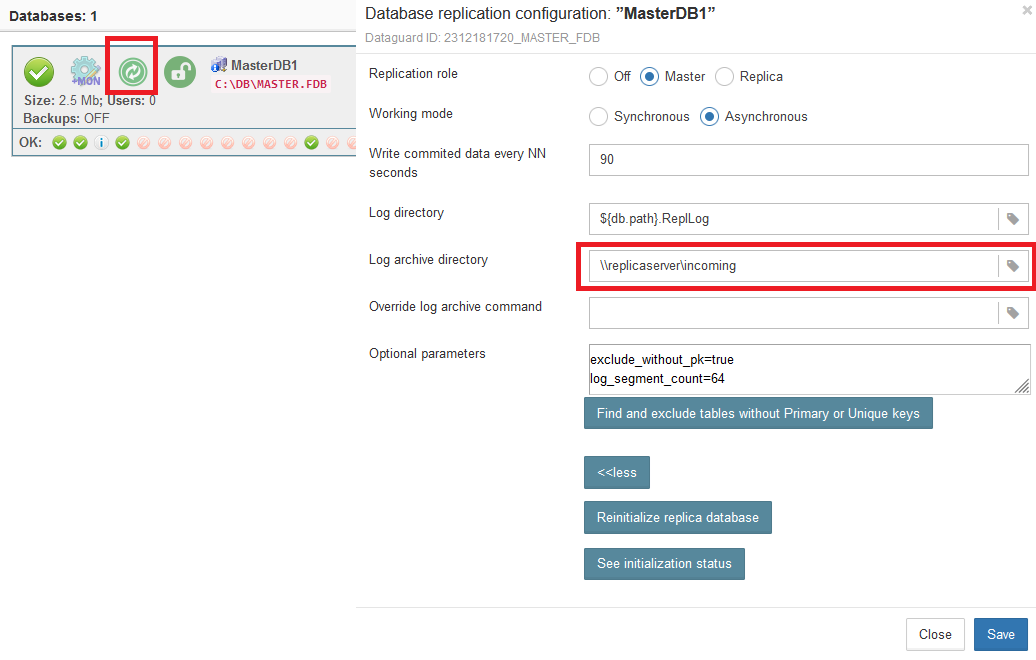

For logs stored on the replica, open the replication setup dialog on the master server, set the parameter «Log archive directory» to the network share that exposes a local folder of the replica, and restart Firebird to apply the new settings.

After that, Firebird on the master will start to create replication segment files on the specified share. Firebird on the replica server should be configured to read these segments from the local folder.

For the option "Logs on the master" (recommended), set up the master to store replication segments in a local folder, expose it as a network share, and configure the replica to read logs from that share.
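
Whichever layout you choose, the side that writes segments needs write access to the folder and the side that reads them needs read access. A simple way to verify this is a small probe like the Python sketch below. The helper names are invented for the example, and the result is only meaningful if the script runs under the same account as the Firebird service.

```python
import os
import tempfile


def can_write(folder):
    """Try to create (and automatically delete) a temporary file in `folder`."""
    try:
        with tempfile.NamedTemporaryFile(dir=folder):
            pass
        return True
    except OSError:
        return False


def can_read(folder):
    """Try to list the contents of `folder`."""
    try:
        os.listdir(folder)
        return True
    except OSError:
        return False
```

On the server that writes segments, `can_write` should return `True` for the path used as «Log archive directory»; on the server that only reads them, `can_read` should return `True` for the mounted share.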

Pros

- Easy to configure in a typical local network

Cons

- Requires a fast and stable network connection (from 1 Gbit)

- Not applicable for distributed environments

- Segments are transferred through the network as is, not compressed, not encrypted

Summary

As you can see, it is easy to set up asynchronous replication between master and replica with either a network share or FTP/SSH.

Please feel free to contact us with any questions: [email protected].
