Library

Tuning 1.7 Terabyte Firebird SQL database

Alexey Kovyazin, 14-July-2014

As you remember from our previous articles, we have investigated myths about Firebird performance degradation (http://ib-aid.com/en/articles/firebird-performance-degradation-tests-myths-and-truth/), where we have created several databases from 9Gb to 30Gb, and also tested very large Firebird database (1.7 terabyte) (http://ib-aid.com/en/articles/more-details-about-1-7-terabyte-firebird-sql-database/).

All tests were taken on the same hardware, which is kind of low-budget configuration: CPU AMD-FX8350, RAM 16GB, SATA software RAID1 2x4Tb HDD Seagate drives, Operation System Windows Server 2008R2 (2008R2 is 64 bit), and with the same Firebird configuration: it was Firebird 2.5.2 64-bit, SuperServer, with increased page buffers and TempCacheSize. You can download this SuperServer configuration file for free from this location: http://ib-aid.com/en/optimized-firebird-configuration/

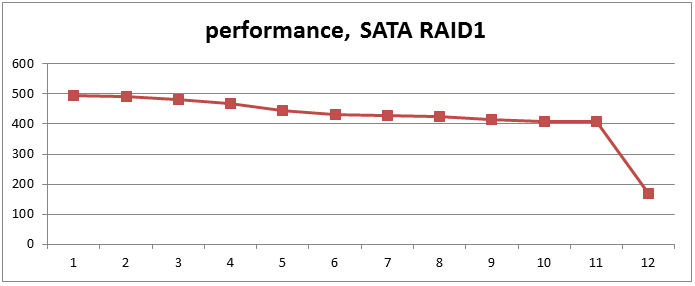

As a result, we had the following picture of database performance:

Figure 1. Performance degradation from 9Gb to 30Gb, and 1,7Tb

Point #11 is a performance mark for 30Gb database and #12 for 1.7 Terabyte database – the database size has grown 60 times (from 30Gb to 1813Gb), performance loss was 2.4 times (from 407 to 169 points).

So, the question is: can we improve Firebird performance on the same hardware with configuration tuning?

And the answer is: yes!

Supercharging FirebirdSQL

As you remember, in this test we run 20 simultaneous connections which perform intensive INSERTs and, less intensive, UPDATEs.

All SELECTs are short and well-defined, with effective SQL execution plans, so this is a typical OLTP (online transactions processing) application. For such applications the most critical thing for performance is parallel processing. Firebird SuperServer 2.5.2 is not suited well for multi-threading processing – it effectively uses only 1 core per database, and this fact makes SuperServer not very good choice for OLTP application.

So, we need to change Firebird architecture to Classic or SuperClassic, which support multi-threading processing and utilize multiple cores of CPU (there are 8 cores in CPU AMD-FX8350).

Then, some tuning needed, since default configuration for Firebird is not optimal for our test system.

Tuning parameters in firebird.conf

Page cache

So, in order to improve OLTP performance we decided to try Classic and SuperClassic architectures. One of the key parameters for Classic and SuperClassic is number of page buffers in cache. Unlike SuperServer, Classic and SuperClassic allocate page cache per connection.

For more details about Firebird architectures in 2.5 you can review this table this http://www.firebirdsql.org/file/fb25_architecture_comparison.pdf

There is an easy formula to calculate page cache memory usage for different Firebird Architectures:

- 1. SuperServer – single page cache per database. Default page cache size is 2048 pages, mutual recommendation is 10000 buffers. In our case cache was 16k (page size) x 10000 ~= 160Mb. It is for all connections.

- 2. Classic and SuperClassic – engine allocate page cache for each connection. Default size is 75 pages, so 16Kb (page size) x 75 = ~= 1.17 Mb, for each connection.

Obviously, page cache size should be increased, since 75 pages per connection is too low. We will show below results of several different values for page cache size.

LockHashSlots

Internally Firebird engine uses lock table to request and acquire locks for internal objects inside database, and for Classic and SuperClassic there is parameter LockHashSlots (default value is 1009). It should be increased under high load, to decrease hash chains in the lock table. Well, “high load” seems to be any real-world multi-user application, so we have set it to 30011 (for all tests).

LockMemSize

Parameter LockMemSize is used to setup initial lock table size (default value is 1048576). Engine can increase table size on demand. However, increase of lock table is expensive in terms of CPU and other resources, since it is performed through memory remapping. So we have set it to 7Mb, in order to save some time and CPU resources.

Test runs

We made several test runs with various values of page cache value, with the following results:

| Page buffers | Classic, test points | SuperClassic, test points |

| 256 | 299 | 372 |

| 512 | 371 | 359 |

| 768 | 362 | 386 |

| 1024 | 312 | 387 |

| 1500 | 390 | 392 |

| 2048 | 285 | 284 |

Table 1. Test runs for Classic and SuperClassic

As you can see, Classic and SuperClassic are much more effective than Firebird SuperServer for this task: test results improved from 169 points to 300-400, this is close to results we had for 30Gb database!

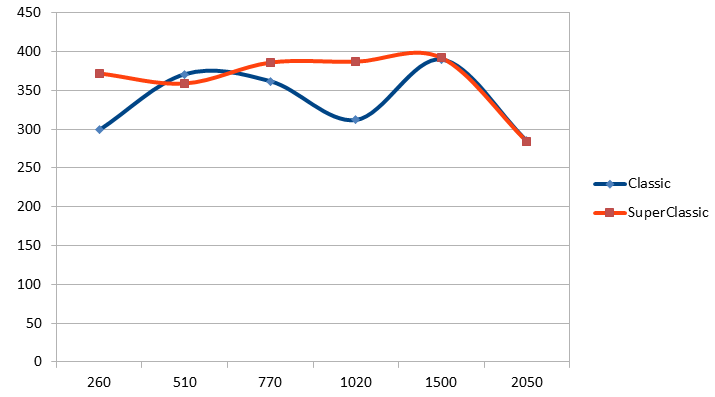

It's better to see results on the following graphic:

Figure 2. Test results for 1.7 Terabyte database

You can see that best performance (both at Classic and SuperClassic) was at 1500 pages per connection, so page cache per connection size was:

1500x16k ~= 23,4Mb

With 2000 pages per connection the performance decreased significantly. It seems that somewhere around 1500-2000 pages the advantage of caching became lower than overhead caused by lock table interactions between processes to synchronize pages in caches of each server process. Obviously, for higher number of connections this will happen earlier, so that's why Classic/SuperClassic servers are usually configured with numbers like 256-512 pages.

There is also drop of Classic performance around 768-1000 page caches – not sure why it happened.

Summary

Our experiments confirm that Firebird performance can be increased with correct selection of Firebird architecture (SuperServer, Classic or SuperClassic) and with an appropriate tuning of several important parameters for the specific architecture.

As a result, a huge 1.7 Tb Firebird SQL database can work on low-end hardware with a good enough performance. As a practical output from these tests, we have created several configuration files for all versions Firebird for all architectures. Of course, they are not tuned for the specific application and/or hardware, but they are better than default configuration files, which are made for very modest load.

Full set of optimized Firebird configuration files: http://ib-aid.com/en/optimized-firebird-configuration/

Feel free to ask any questions: [email protected]

What next?

We are working at comprehensive test which will compare Firebird 2.5 and Firebird 3.0 performance, with real-world simulation of load and huge number of connections. Stay tuned!

en

en